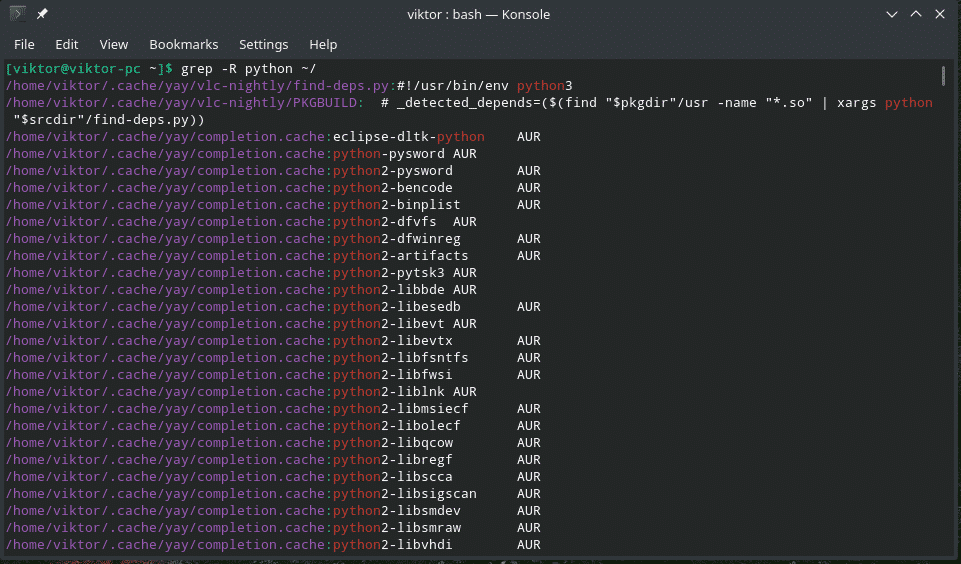

An example of GNU Grep in operation.

Command-line options aka switches of grep:

* -c: Output count of matching lines only.
* -n: Precede each matching line with a line number.
* -b: A historical curiosity: precede each matching line with a block number.
* -h: Output matching lines without preceding them by file names.
* -s: Suppress error messages about nonexistent or unreadable files.

Command-line options aka switches of GNU grep, beyond the bare-bones grep:

* -o: Output the matched parts of a matching line.
* --regexp=pattern, in addition to -e pattern.

Links:

* 2.1 Command-line Options at grep manual, gnu.org.
* Unix grep(1) manual page, DESCRIPTION section.

Grep uses a particular version of regular expressions different from sed and Perl. Grep covers POSIX basic regular expressions (see also Regular Expressions/Posix Basic Regular Expressions). Regular expression features available in grep include *.

The regex here is made up from .* which can stand for anything in a file's name and \(txt\|jpg\) which yields either txt or jpg as file endings.

Not really a grep example, but a Perl one-liner that you can use if Perl is available and grep is not:

    perl -ne "print if /\x22hello\x22/" file.txt

The -f filename option on grep is used to specify a file that contains patterns to match, one per line. ... or you can be a little lazy and just type, grep -f /tmp/cr.

Versions:

* Old versions of GNU grep can be obtained from GNU ftp server.
* A version of GNU grep for MS Windows is available from GnuWin32 project, as well as from Cygwin.
* A changelog of GNU grep is available.
* Release announcements of GNU grep are at a savannah group.

External links:

* GNU grep user's manual as one page at gnu.org.
* Unix grep(1) manual page at man.cat-v.

I watched the above and a previous video where you did some extractions for homicides by shooting in Baltimore. I've experimented with trying to come up with a solution using a table of regular expression wildcards, to include 'or (|)', 'and (%)' and 'not (!)', so that when there's a match, the second column has a standardized term to be used. Ideally, when I run this, a new column will be joined to my source data frame with the correct term. The below is what I posted to Stack Overflow in hopes of finding a solution.

I need to do a pattern match based on a 2-column list. This works fine when I'm using exact match, for matching various locations near a distribution hub.

    lfp <- "D:/Libraries/Documents/Survey/Location Cleanse List.csv"
    ltbl <- readtext(lfp) # 2 vectors (Location_Pattern, Cleansed_Hub)
    ctbl <- 'database extract' # 3 columns (doc_id, Customer_ID, Customer_Location)
    # Customer_Location is a concatenation of 'Customer_City' and 'Customer_State' in a prior procedure
    ctbl$Customer_Hub <- ltbl$Cleansed_Hub
    # This adds a new column with location of hub to ctbl, which will later be joined to another matrix for additional analysis based on regional hubs.

However, I'm finding a lot of "dirty" locations, so rather than exact match, I need to add regular expression wildcards to the Customer_Location to compensate for typos and abbreviations entered by customers when they entered "Customer_City". The "Customer_State" will always be good due to dropdown selection by customers.

I've done additional processing to ctbl to change all Customer_Location to lowercase and even re-classed the vector as Expression, but nothing seems to work…

    class(ltbl$Location_Pattern) <- "Expression"
    ctbl$Customer_Location <- tolower(ctbl$Customer_Location)
    ltbl$Location_Pattern <- tolower(ltbl$Location_Pattern)

Entries from the pattern list (the last one is truncated):

    "^west*lia*tx|^W*phalia*TX", "Temple, TX"
    "Fort Worth, TX", "Ft.
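One thing that may be worth checking in the question's patterns: in a regular expression, `*` repeats the preceding character instead of standing for any text, so `^west*lia*tx` asks for "wes", any number of "t", then "li", any number of "a", then "tx". A glob-style "anything" is written `.*`. The following is only a sketch of the table-lookup idea using grep in a shell script, not the poster's R solution. The two patterns, the tab-separated table layout and the `lookup_hub` helper are all hypothetical, made up for illustration.

```shell
# Hypothetical pattern table: extended regex <TAB> standardized hub name.
patterns=$(mktemp)
printf '%s\t%s\n' \
  '^(fort|ft\.?) *worth.*tx$' 'Fort Worth, TX' \
  '^west.*ph?alia.*tx$'       'Temple, TX' > "$patterns"

# lookup_hub (hypothetical helper): print the hub of the first pattern
# that matches the location given as $1, or NA when no pattern matches.
lookup_hub() {
  location=$1
  while IFS="$(printf '\t')" read -r regex hub; do
    # -E: extended regex, -i: ignore case, -q: quiet, exit status only
    if printf '%s\n' "$location" | grep -Eiq -- "$regex"; then
      printf '%s\n' "$hub"
      return 0
    fi
  done < "$patterns"
  printf 'NA\n'
}

lookup_hub 'Ft. Worth, TX'    # prints: Fort Worth, TX
lookup_hub 'westpalia tx'     # prints: Temple, TX
lookup_hub 'Dallas, TX'       # prints: NA
```

Because `-i` makes the match case-insensitive, lowercasing both columns beforehand is not needed in this sketch. The first matching row wins, so more specific patterns would have to come earlier in the table.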
